Having simplified access to Amazon Redshift from Amazon SageMaker and Jupyter notebooks.Building your ETL pipelines with AWS Step Functions, Lambda, and stored procedures.Running your query one time and retrieving the results multiple times without having to run the query again within 24 hours.Designing asynchronous web dashboards because the Data API lets you run long-running queries without having to wait for it to complete.

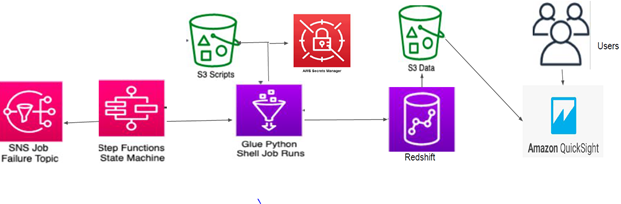

Building a serverless data processing workflow.For example, you can run SQL from JavaScript. This enables you to integrate web service-based applications to access data from Amazon Redshift using an API to run SQL statements. Accessing Amazon Redshift from custom applications with any programming language supported by the AWS SDK.It’s applicable in the following use cases: The Amazon Redshift Data API is not a replacement for JDBC and ODBC drivers, and is suitable for use cases where you don’t need a persistent connection to a cluster. Integration with the AWS SDK provides a programmatic interface to run SQL statements and retrieve results asynchronously. The Data API federates AWS Identity and Access Management (IAM) credentials so you can use identity providers like Okta or Azure Active Directory or database credentials stored in Secrets Manager without passing database credentials in API calls.įor customers using AWS Lambda, the Data API provides a secure way to access your database without the additional overhead for Lambda functions to be launched in an Amazon Virtual Private Cloud (Amazon VPC). Your query results are stored for 24 hours. The Data API is asynchronous, so you can retrieve your results later. The Data API takes care of managing database connections and buffering data. Instead, you can run SQL commands to an Amazon Redshift cluster by simply calling a secured API endpoint provided by the Data API. The Data API simplifies access to Amazon Redshift by eliminating the need for configuring drivers and managing database connections. The Amazon Redshift Data API simplifies data access, ingest, and egress from programming languages and platforms supported by the AWS SDK such as Python, Go, Java, Node.js, PHP, Ruby, and C++. The following diagram illustrates this architecture. 
The Amazon Redshift Data API enables you to painlessly access data from Amazon Redshift with all types of traditional, cloud-native, and containerized, serverless web service-based applications and event-driven applications. We also explain how to use AWS Secrets Manager to store and retrieve credentials for the Data API.
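As a rough illustration of the asynchronous flow described above (submit a statement, check its status, then fetch the stored result), here is a minimal Python sketch. It assumes a boto3 `redshift-data` client is passed in and that database credentials live in a Secrets Manager secret. The function name `run_query` and its parameters (`cluster_id`, `database`, `secret_arn`, `poll_seconds`, `max_polls`) are hypothetical names chosen for this example, not part of the original post.

```python
import time


def run_query(client, sql, *, cluster_id, database, secret_arn,
              poll_seconds=1.0, max_polls=60):
    """Submit SQL through the Data API, poll until it finishes, return rows.

    `client` is expected to behave like boto3.client("redshift-data").
    All identifiers passed in are placeholders supplied by the caller.
    """
    # Submit the statement; the call returns immediately with a statement Id.
    submitted = client.execute_statement(
        ClusterIdentifier=cluster_id,
        Database=database,
        SecretArn=secret_arn,  # credentials come from Secrets Manager
        Sql=sql,
    )
    statement_id = submitted["Id"]

    # Poll the statement status until it reaches a terminal state.
    for _ in range(max_polls):
        description = client.describe_statement(Id=statement_id)
        status = description["Status"]
        if status == "FINISHED":
            break
        if status in ("FAILED", "ABORTED"):
            raise RuntimeError(description.get("Error", "statement did not finish"))
        time.sleep(poll_seconds)
    else:
        raise TimeoutError(f"statement {statement_id} still running")

    # Fetch the stored result, following NextToken if the result is paged.
    rows = []
    request = {"Id": statement_id}
    while True:
        page = client.get_statement_result(**request)
        for record in page["Records"]:
            # Each field is a one-key dict such as {"stringValue": "x"}.
            rows.append([
                None if field.get("isNull") else next(iter(field.values()))
                for field in record
            ])
        token = page.get("NextToken")
        if not token:
            return rows
        request["NextToken"] = token
```

With boto3 installed and AWS credentials configured, a call might look like `run_query(boto3.client("redshift-data"), "select 1", cluster_id=..., database=..., secret_arn=...)`. Because results are kept for 24 hours, the final fetch step could also be repeated later with the same statement Id instead of rerunning the query.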

This post was updated on July 28, 2021, to include multi-statement and parameterization support.

Amazon Redshift is a fast, scalable, secure, and fully managed cloud data warehouse that makes it simple and cost-effective to analyze all your data using standard SQL and your existing ETL (extract, transform, and load), business intelligence (BI), and reporting tools. Tens of thousands of customers use Amazon Redshift to process exabytes of data per day and power analytics workloads such as BI, predictive analytics, and real-time streaming analytics.

As a data engineer or application developer, for some use cases, you want to interact with Amazon Redshift to load or query data with a simple API endpoint without having to manage persistent connections. With the Amazon Redshift Data API, you can interact with Amazon Redshift without having to configure JDBC or ODBC. This makes it easier and more secure to work with Amazon Redshift and opens up new use cases.

This post explains how to use the Amazon Redshift Data API from the AWS Command Line Interface (AWS CLI) and Python.

To access Amazon S3 resources that are in a different account from where Amazon Redshift is in use, perform the following steps. These steps apply to both Redshift Serverless and Redshift provisioned data warehouses:

1. Create RoleA, an IAM role in the Amazon S3 account.
2. Create RoleB, an IAM role in the Amazon Redshift account with permissions to assume RoleA.
3. Test the cross-account access between RoleA and RoleB.

Note: These steps work regardless of your data format. However, there might be some changes in the COPY and UNLOAD command syntax while performing these operations. For example, if you're using the Parquet data format, your syntax looks like this:

    copy table_name from 's3://awsexamplebucket/crosscopy1.csv' iam_role 'arn:aws:iam::Amazon_Redshift_Account_ID:role/RoleB,arn:aws:iam::Amazon_S3_Account_ID:role/RoleA' format as parquet;

Resolution

Note: The following steps assume that the Amazon Redshift cluster and the S3 bucket are in the same Region. If they're in different Regions, then you must add the REGION parameter to the COPY or UNLOAD command.

Create an IAM role in the account that's using Amazon S3 (RoleA)

Choose Policies, and then choose Create policy.
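The role-chaining COPY syntax described above can be sketched as a small Python helper that assembles the statement text. This is only an illustration: the function name `build_cross_account_copy` and its parameters are hypothetical, the role names RoleB and RoleA follow the steps above, and the way the optional REGION clause is appended is an assumption that should be checked against the COPY documentation for your data format.

```python
def build_cross_account_copy(table, s3_uri, redshift_account_id, s3_account_id,
                             data_format="parquet", region=None):
    """Return a COPY statement that chains RoleB (Redshift account) to RoleA (S3 account).

    Inputs are interpolated as-is, so they must come from trusted configuration,
    never from end-user input.
    """
    # Chained roles are listed comma-separated with no spaces:
    # the Redshift-account role first, then the S3-account role it assumes.
    role_chain = ",".join([
        f"arn:aws:iam::{redshift_account_id}:role/RoleB",
        f"arn:aws:iam::{s3_account_id}:role/RoleA",
    ])
    sql = f"copy {table} from '{s3_uri}' iam_role '{role_chain}' format as {data_format}"
    if region:
        # Assumption: used only when the bucket and cluster are in different Regions.
        sql += f" region '{region}'"
    return sql + ";"


if __name__ == "__main__":
    # Placeholder account IDs, not real accounts.
    print(build_cross_account_copy(
        "table_name",
        "s3://awsexamplebucket/crosscopy1.csv",
        "111111111111",
        "222222222222",
    ))
```

The resulting string could then be submitted with any SQL client, including the Data API's `execute_statement` call, once RoleA and RoleB exist and RoleB is attached to the Redshift data warehouse.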
